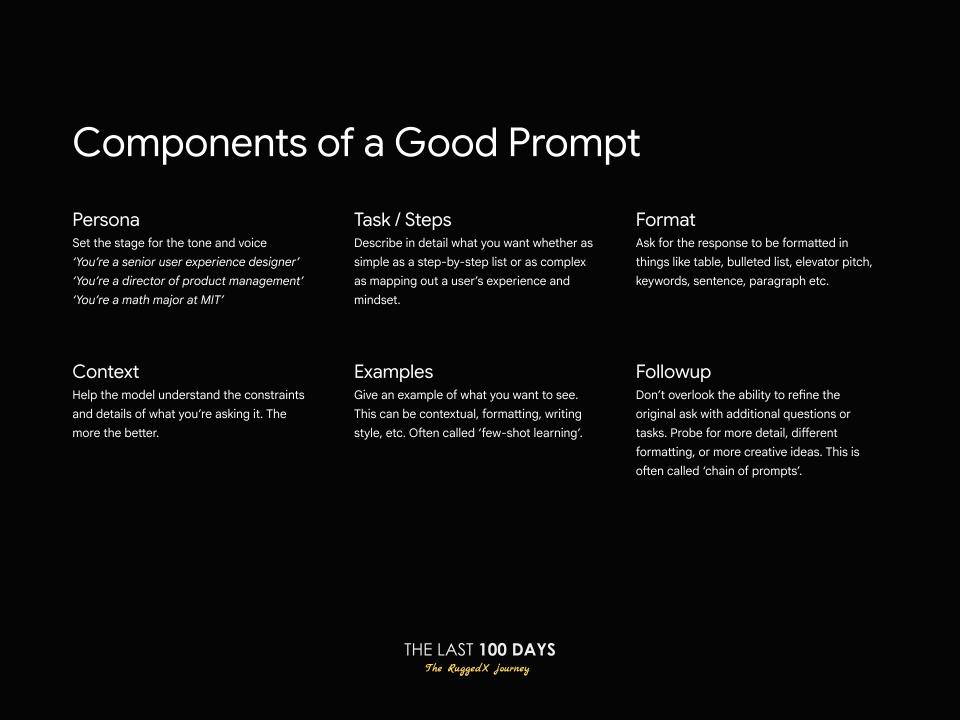

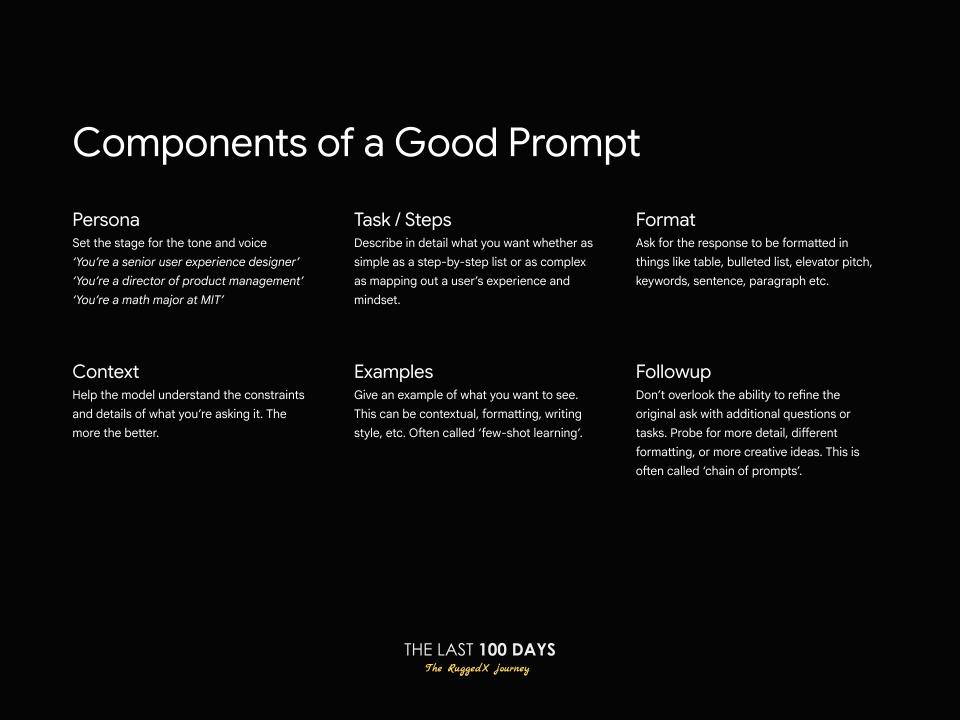

The 6 Components of a Good Prompt

Understand the essential components of a good prompt and how to craft effective instructions for AI models.

Published: Monday, Nov 10 2025

In the world of Generative AI, the models are brilliant, but they are not mind-readers. The difference between a vague, generic answer and a precise, actionable result often comes down to one thing: the quality of your prompt.

A prompt is more than just a question; it's an instruction set. Building an effective instruction set requires understanding its core components. Whether you are generating code, summarizing a document, or creating an AI agent for a trading platform like Neptune, you need structure.

We've broken down the anatomy of a perfect prompt into six essential components—the same structure we use when training our AI decision layers. Mastering these six elements will transform your interactions with any Large Language Model (LLM).

A good prompt should move beyond a simple command and provide the LLM with everything it needs to know about who it is, what it's doing, and how the output should look.

The Persona sets the tone, voice, and expertise of the model's response. Without a persona, the model speaks as a generic helper. With one, it speaks with authority and context.
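As a minimal sketch, a persona is often supplied as a separate "system" message ahead of the user's request. The role names and the `with_persona` helper below are assumptions for illustration (many chat-style APIs accept role-tagged messages, but check your provider's format), and no model is called here.

```python
def with_persona(persona: str, user_request: str) -> list[dict[str, str]]:
    """Return chat-style messages with the persona placed in the system slot.

    The "system"/"user" role names are an assumed convention, not a specific API.
    """
    return [
        {"role": "system", "content": persona.strip()},
        {"role": "user", "content": user_request.strip()},
    ]


messages = with_persona(
    "You are a senior risk analyst who explains trade-offs in plain language.",
    "Summarize the main risks of trading with high leverage.",
)
```

Keeping the persona separate from the request makes it easy to reuse the same voice across many different tasks.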

The Task / Steps component is the core instruction. It must be detailed, linear, and leave no room for ambiguity. Break down complex tasks into a simple, numbered list for the model to follow sequentially.
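One way to keep the steps linear is to store them as a list and number them when the prompt is built. The `render_steps` helper and the sample task are hypothetical and only illustrate the idea.

```python
def render_steps(goal: str, steps: list[str]) -> str:
    """Render a task and its steps as a numbered list for the model to follow in order."""
    lines = [f"Task: {goal}", "Follow these steps in order:"]
    lines += [f"{number}. {step}" for number, step in enumerate(steps, start=1)]
    return "\n".join(lines)


task_prompt = render_steps(
    "Summarize the attached quarterly report.",
    [
        "Read the full report.",
        "List the three largest changes from the previous quarter.",
        "Write a summary of at most 100 words.",
    ],
)
print(task_prompt)
```

Because the steps live in a list, reordering or inserting a step never leaves the numbering out of date.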

The Context helps the model understand the boundaries, constraints, and specific details of your request. This often includes timeframes, source material, or necessary background information.
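A rough sketch of how context might be attached: state the timeframe and background explicitly, and wrap source material in clear delimiters so the model can tell it apart from the instructions. The `<source>` tag and the `render_context` helper are assumptions chosen for illustration, not a required syntax.

```python
def render_context(timeframe: str, background: str, source_text: str) -> str:
    """Build a context section with explicit boundaries around the source material."""
    return (
        "Context:\n"
        f"- Timeframe: {timeframe}\n"
        f"- Background: {background}\n\n"
        "Use only the source material between the <source> tags.\n"
        f"<source>\n{source_text.strip()}\n</source>"
    )


context_block = render_context(
    timeframe="Q3 2025",
    background="The reader is new to the product.",
    source_text="Revenue grew 4% quarter over quarter.\n",
)
print(context_block)
```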

The Format is non-negotiable for developers and content creators. It ensures the output is immediately usable, whether it’s a JSON object for an API or a markdown table for a blog post.
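For a JSON format, it helps to state the required keys in the prompt and then verify the reply before passing it on. The sketch below uses only Python's standard `json` module; the key names, the `parse_reply` helper, and the sample reply are hypothetical stand-ins, and no model is called.

```python
import json

# Format instruction to append to the prompt (key names are illustrative).
FORMAT_INSTRUCTION = (
    "Respond with a single JSON object and nothing else. "
    'Required keys: "summary" (string) and "risk_level" '
    '(one of "low", "medium", "high").'
)


def parse_reply(reply: str) -> dict:
    """Parse a model reply and check that it matches the requested format."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"summary", "risk_level"} - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    if data["risk_level"] not in {"low", "medium", "high"}:
        raise ValueError(f"unexpected risk_level: {data['risk_level']!r}")
    return data


# A hand-written stand-in for a model reply, used only to exercise the parser.
sample_reply = '{"summary": "Volatility rose this week.", "risk_level": "medium"}'
parsed = parse_reply(sample_reply)
```

Validating the reply turns a format request into a checkable contract: a malformed response fails fast instead of breaking the code that consumes it.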

Providing Examples, often called "few-shot prompting," is one of the most effective ways to control style, tone, and accuracy. By showing the model exactly what you want to see, you can substantially improve the fidelity of the output.
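A minimal sketch of assembling examples into a prompt: each example is an input/output pair, and the prompt ends with the new input and an open "Output:" slot for the model to complete. The `Input:`/`Output:` labels, the `build_few_shot_prompt` helper, and the sample headlines are illustrative assumptions.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    new_input: str,
) -> str:
    """Assemble an instruction, worked examples, and the new input into one prompt."""
    parts = [instruction.strip(), ""]
    for source, target in examples:
        parts += [f"Input: {source}", f"Output: {target}", ""]
    # Leave the final output empty so the model continues the pattern.
    parts += [f"Input: {new_input}", "Output:"]
    return "\n".join(parts)


few_shot_prompt = build_few_shot_prompt(
    "Rewrite each headline in a neutral tone.",
    [
        ("Stocks CRASH as panic spreads!", "Stocks fall amid investor concern."),
        ("Tech giant DESTROYS earnings forecast", "Tech company exceeds earnings forecast."),
    ],
    "Oil prices EXPLODE overnight",
)
print(few_shot_prompt)
```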

The Followup recognizes that working with AI is a conversation, not a single query. Don’t overlook the ability to refine the original ask with additional questions or probes; this is often called the "chain of prompts."
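In code, a follow-up usually means sending the earlier turns back along with the new question so the model can refine its previous answer. The sketch below assumes a chat-style list of role-tagged messages; the role names, the `add_followup` helper, and the sample replies are hypothetical, and no model is called.

```python
def add_followup(
    history: list[dict[str, str]],
    assistant_reply: str,
    followup: str,
) -> list[dict[str, str]]:
    """Return a new history extended with the model's reply and a follow-up question."""
    return history + [
        {"role": "assistant", "content": assistant_reply},
        {"role": "user", "content": followup},
    ]


first_turn = [{"role": "user", "content": "Summarize this report in 100 words."}]
conversation = add_followup(
    first_turn,
    assistant_reply="(the model's first summary would go here)",
    followup="Shorten that to two sentences and keep the revenue figure.",
)
```

The follow-up can stay short ("shorten that") because the earlier turns travel with it.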

If you treat your prompts like engineering specifications (detailed, constrained, and unambiguous), you will get more predictable, higher-quality results. Vague or underspecified prompts are a common cause of irrelevant output and make "AI hallucination" more likely.

For your next task, run through the six-component checklist: Persona, Task / Steps, Context, Format, Examples, and Followup.
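The checklist can also be expressed as a small data structure that reports which components are still empty before a prompt is sent. This is a sketch under stated assumptions: the `PromptSpec` class, its field names, and the section labels are hypothetical, not part of any library.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptSpec:
    """The six components of a prompt; an empty string means 'not filled in yet'."""
    persona: str = ""
    task: str = ""
    context: str = ""
    output_format: str = ""
    examples: str = ""
    followup: str = ""

    def missing(self) -> list[str]:
        """Return the names of components that are still empty, in checklist order."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Join the filled-in components into the first prompt.

        The follow-up is a later turn in the conversation, so it is left out here.
        """
        sections = [
            ("Persona", self.persona),
            ("Task", self.task),
            ("Context", self.context),
            ("Format", self.output_format),
            ("Examples", self.examples),
        ]
        return "\n\n".join(
            f"{label}:\n{text.strip()}" for label, text in sections if text.strip()
        )


spec = PromptSpec(
    persona="You are a patient technical writer.",
    task="1. Read the notes.\n2. Write a 100-word summary.",
)
print(spec.missing())
print(spec.render())
```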

Mastering this framework is the real art and science of working with modern LLMs. It shifts your role from simply asking a question to actively directing the intelligence.